When OpenAI quietly confirmed its partnership with Broadcom this week, it wasn’t just another supply-chain deal; it was a statement of intent.

The company that has relied on Nvidia’s GPUs to power ChatGPT now wants to design its own brain: a fleet of custom AI processors built for a decade of exponential demand. OpenAI will lead the design, with Broadcom as its partner in developing and deploying the chips. Together, they plan to deploy 10 gigawatts of computing capacity, a load comparable to the electricity use of several million U.S. homes, with the rollout beginning in the second half of 2026 and running through 2029.

To most analysts, that number sounds unreal. To Sam Altman, it’s simply the next stage of AI’s expansion.

The Meaning Behind the Move

For months, Altman has said that progress in AI will soon be limited not by algorithms but by the supply of energy and compute. By creating its own chips, OpenAI joins a small circle of companies, including Google, Amazon, and Apple, that control both software and silicon.

But unlike them, OpenAI’s focus isn’t on smartphones or ads; it’s on the infrastructure for intelligence itself.

This partnership also marks a turning point in the global chip race. Nvidia still dominates, but Broadcom’s entry into large-scale AI infrastructure shows how quickly the ecosystem is diversifying.

A Push for Independence

The deal hints at OpenAI’s push for greater independence from suppliers, a critical step if it wants to scale beyond ChatGPT into fully autonomous AI systems. Analysts say OpenAI’s ability to fund such an ambitious rollout reflects extraordinary investor confidence in a startup valued at $500 billion.

If completed, this project could become the world’s most powerful AI computing hub, redefining how energy, chips, and algorithms intersect in the next chapter of Silicon Valley innovation.

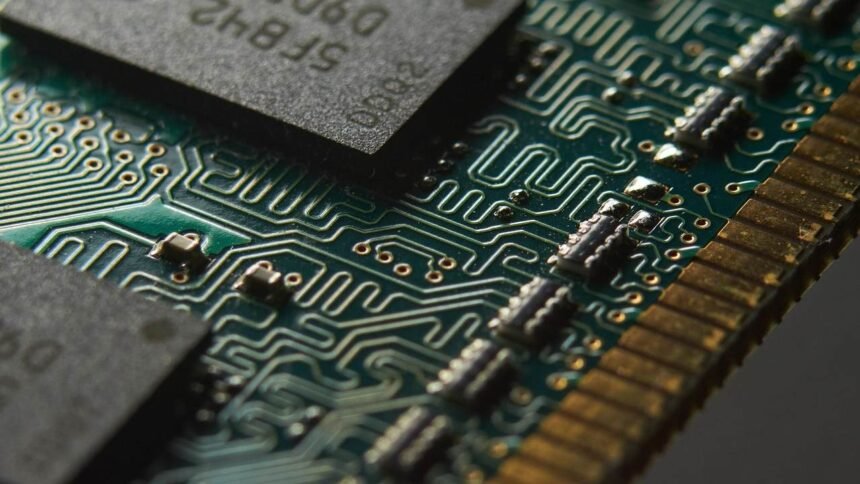

A detailed close-up of a semiconductor chip, symbolizing OpenAI’s next phase in AI hardware development. Image via Pixabay.
