When Michael Dell speaks, the tech world listens.

This week, while celebrating record AI-driven growth, the Dell Technologies CEO quietly dropped a sentence that made analysts pause: “At some point there’ll be too many AI data centers.”

It sounded casual, almost offhand, but coming from the man whose company builds the machines powering OpenAI and CoreWeave, it felt like a signal.

The Boom No One Wants to End

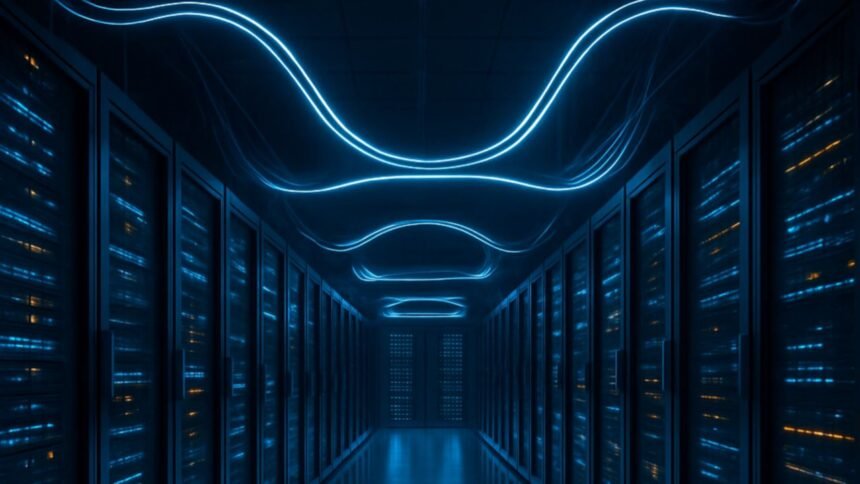

Right now, AI infrastructure is the hottest bet in tech.

Every major player, including Microsoft, Google, and Amazon, is racing to build larger, faster, more power-hungry data centers. Dell's AI servers, running Nvidia's latest Blackwell Ultra chips, are already sold out months ahead.

But Dell's remark raises a looming question: how long can this expansion continue before physics, energy, or economics push back?

The Energy Equation No One Has Solved Yet

OpenAI's recent partnership with Nvidia to build 10 gigawatts of new data center capacity would draw roughly as much electricity in a year as about 8 million U.S. homes.

That's not a typo. It's a warning.

According to the U.S. Energy Information Administration, the country is expected to add only 63 gigawatts of new power capacity in 2025.

If AI data centers claim even a fraction of that, what’s left for everything else?
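The homes comparison and the "fraction of that" question can both be checked with simple arithmetic. This is a rough sketch under loud assumptions: it treats the full 10 GW as running at constant load for all 8,760 hours of a year (real data centers do not), and it sets 10 GW against a single year's 63 GW of additions even though the two figures are not tied to the same timeline. The function names are illustrative only.

```python
# Rough energy arithmetic for the figures quoted above.
# Assumption: constant full load all year; helper names are hypothetical.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours in a non-leap year
KW_PER_GW = 1_000_000      # 1 gigawatt = 1,000,000 kilowatts


def annual_energy_kwh(power_gw: float) -> float:
    """Energy drawn in one year at a constant load of power_gw, in kWh."""
    return power_gw * KW_PER_GW * HOURS_PER_YEAR


def kwh_per_home(power_gw: float, homes: int) -> float:
    """Annual energy per home implied by spreading power_gw across homes."""
    return annual_energy_kwh(power_gw) / homes


def share_of_new_capacity(power_gw: float, new_capacity_gw: float) -> float:
    """Fraction of a year's new capacity that power_gw would represent."""
    return power_gw / new_capacity_gw


if __name__ == "__main__":
    per_home = kwh_per_home(10, 8_000_000)
    share = share_of_new_capacity(10, 63)
    print(f"Implied use per home: {per_home:,.0f} kWh/year")
    print(f"10 GW vs. 63 GW of 2025 additions: {share:.1%}")
```

Under those assumptions, 10 GW works out to about 10,950 kWh per home per year, and to roughly 16% of the capacity the U.S. expects to add in 2025.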

Dell himself admitted that customers are already delaying server deliveries because “we won’t have power in the building to support it.”

The Real Insight: When Abundance Becomes a Bottleneck

The irony of the AI era is striking: unlimited ambition built on limited electricity.

The next trillion-dollar problem in tech might not be a shortage of chips, but the power grid itself.

Dell's comments may mark the start of a new conversation: not about how fast AI can grow, but about how long the infrastructure can keep up.

The age of AI expansion is here.

But the age of AI efficiency might be closer than anyone expects.
