The Return of a Technology That Never Really Left

AI deepfakes are everywhere again, and this time they’re almost impossible to ignore.

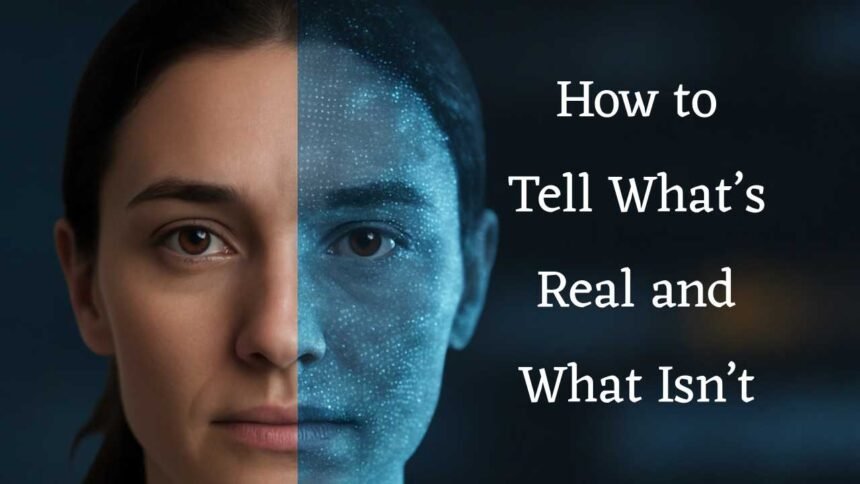

From viral celebrity “interviews” that never happened to political videos convincing enough to fool millions, artificial intelligence has quietly blurred the line between truth and fabrication.

A few years ago, it was easy to spot a fake. Skin tones looked plastic, eyes blinked out of sync, and voices felt lifeless. In 2025, however, deepfakes don’t just look real — they feel real. That’s the part that’s shaking people’s confidence in what they see online.

How We Got Here: The New Generation of AI Video

The latest deepfake boom isn’t a coincidence. Tools like OpenAI’s Sora, Google Veo, and Runway Gen-3 have taken AI video generation to a level of detail once thought impossible.

Micro-expressions, camera jitter, realistic lighting, and natural background sound are all now part of the package.

What’s changed most is access.

Anyone with a phone can now generate a hyper-realistic video in seconds. The technology that once required specialized GPUs is now cloud-based, fast, and often free to try.

That democratization has made AI video creation go mainstream — for creators, marketers, and scammers alike.

The Emotional Realism Problem

One reason people find today’s deepfakes so disturbing is that they’re emotionally convincing.

Researchers at Stanford found that viewers often respond more strongly to synthetic emotional cues than to real ones.

In other words, if a deepfake “feels” right, our brains tend to accept it as truth, even if logic tells us it’s fake.

This psychological vulnerability explains why fake celebrity statements or doctored political clips spread faster than official corrections. Once emotion takes over, verification becomes secondary.

The New Deepfake Landscape

Deepfake technology isn’t entirely villainous. In fact, much of its current use is positive.

Filmmakers use it to de-age actors, historians to recreate lost archives, and educators for language dubbing and accessibility.

But the same capability can turn dangerous overnight.

Here’s where deepfakes are now creating real-world tension:

- Misinformation: Manipulated clips used to distort political or social narratives.

- Identity Fraud: Voice and face cloning used in scams and impersonation calls.

- Non-consensual content: The fastest-rising misuse, with personal and reputational damage that’s often irreversible.

- AI celebrity culture: Fans recreating “lost” performances or interviews, sometimes crossing ethical boundaries.

How to Tell What’s Real — and What Isn’t

Even as AI improves, subtle flaws still give away most deepfakes.

Experts suggest looking for a few consistent signs:

- Unnatural lighting – shadows don’t align perfectly.

- Softened edges – especially around hair, jewelry, or glasses.

- Voice mismatch – slightly too clean or perfectly balanced audio.

- Emotion lag – expressions that arrive a split-second late.

You can also use context clues:

- Did the clip come from a verified source?

- Are multiple outlets covering the same footage?

- If not, pause before you share: it could be synthetic.
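For readers who like a checklist they can run, the signs and context clues above can be sketched as a small Python helper. This is only an illustration: the names `ClipReview`, `KNOWN_SIGNS`, and `reasons_to_pause`, and the threshold of two outlets, are assumptions made for this sketch and not part of any real detection tool.

```python
from dataclasses import dataclass, field

# Illustrative labels for the four visual and audio signs listed above.
KNOWN_SIGNS = {"unnatural_lighting", "softened_edges", "voice_mismatch", "emotion_lag"}


@dataclass
class ClipReview:
    """Hypothetical notes a viewer might jot down about a clip."""
    observed_signs: set = field(default_factory=set)
    from_verified_source: bool = False
    outlets_covering: int = 0  # outlets carrying the same footage


def reasons_to_pause(review: ClipReview, min_outlets: int = 2) -> list:
    """Collect reasons for caution; an empty list means no red flag was noted.

    min_outlets is an assumed threshold for "multiple outlets".
    """
    reasons = [
        f"visual/audio sign: {sign}"
        for sign in sorted(review.observed_signs & KNOWN_SIGNS)
    ]
    if not review.from_verified_source:
        reasons.append("source is not verified")
    if review.outlets_covering < min_outlets:
        reasons.append("too few outlets cover the same footage")
    return reasons
```

An empty result does not prove a clip is genuine. It only means none of the listed warning signs were noted.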

Big Tech’s Race to Reinforce Reality

The world’s largest tech firms are rushing to rebuild trust in digital media.

Projects like C2PA (the Coalition for Content Provenance and Authenticity) embed cryptographically signed metadata directly into videos, recording where and when they were created.
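The idea behind provenance metadata can be sketched in a few lines of Python. This is a simplified stand-in, not the actual C2PA format: real provenance systems use certificate-based signatures, while this sketch assumes a shared secret key and an HMAC. The function names and manifest fields are hypothetical.

```python
import hashlib
import hmac
import json


def make_manifest(media: bytes, creator: str, created_at: str, key: bytes) -> dict:
    """Build a toy provenance record: a claim about the media plus a signature."""
    claim = {
        "creator": creator,
        "created_at": created_at,
        "sha256": hashlib.sha256(media).hexdigest(),  # fingerprint of the content
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(media: bytes, manifest: dict, key: bytes) -> bool:
    """Return True only if the claim is untampered and matches the media bytes."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim itself was edited
    return manifest["claim"]["sha256"] == hashlib.sha256(media).hexdigest()
```

In this sketch, changing a single byte of the footage or editing the claimed creator makes verification fail. A check like this only helps while the record travels with the file. If the metadata is stripped, there is nothing left to verify.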

Platforms such as YouTube, Instagram, and X are experimenting with labels for AI-generated content and with digital watermarks.

But none of these measures are foolproof.

Watermarks can be removed. Labels can be cropped.

And once a fake goes viral, the correction rarely catches up.

Still, this race to authenticate content represents the first serious step toward a long-term solution.

What the Law Says and Doesn’t

U.S. legislation on deepfakes remains fragmented.

Some states, like California and Texas, have passed laws targeting political or pornographic deepfakes, while others rely on older privacy and defamation statutes.

Federal lawmakers are pushing for a broader AI Transparency Act, which would require disclosure of synthetic media used in campaigns or ads.

Until that arrives, most victims must rely on platform takedowns and civil complaints, a slow and imperfect process.

The Future of Truth Online

So where does this end?

Experts call it the “verification era,” a future where authenticity depends not just on seeing but on checking.

Newsrooms will soon embed digital signatures in footage. Social networks will display source metadata by default. And consumers will rely on browser tools that can instantly analyze whether a video has been altered.

But even with all that, one truth remains: technology will always evolve faster than regulation.

The only reliable filter left is critical thinking: learning to question before we believe.

Final Thoughts

Deepfakes were once a novelty. Today, they’re a mirror, reflecting both the brilliance and the recklessness of our AI age.

They remind us that the next battle for truth won’t be fought in headlines or hashtags; it’ll be fought frame by frame.
